Techniques for interpreting the decisions of convolutional neural networks, with TensorFlow and Keras code for Class Activation Maps (CAM), Gradient-weighted Class Activation Maps (Grad-CAM), pixel-space saliency maps, and Layer CAM.

Layer CAM

Layer CAM visualizes the gradient-weighted feature maps of a convolutional neural network, including those of its shallow layers, to identify the regions of the input that drive the target prediction. It generalizes Grad-CAM, which is applied to the final convolutional layer only.

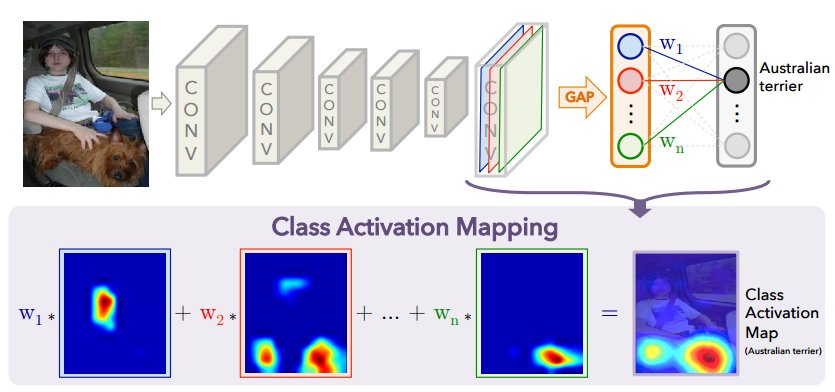
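The weighting step can be sketched in NumPy as below. This is a minimal illustration rather than the code in this repository: it assumes the activations of the chosen layer and the gradients of the target class score with respect to them have already been extracted (in TensorFlow, for example, with `tf.GradientTape`) as arrays of shape `(H, W, K)`. The function names `layer_cam` and `grad_cam` and the random example data are hypothetical.

```python
import numpy as np

def layer_cam(activations, gradients):
    """Layer CAM: weight every location of every channel by its own positive gradient."""
    weights = np.maximum(gradients, 0.0)                 # ReLU of the gradients, shape (H, W, K)
    cam = np.maximum((weights * activations).sum(axis=-1), 0.0)
    return cam / (cam.max() + 1e-8)                      # scale to [0, 1) for display

def grad_cam(activations, gradients):
    """Grad-CAM, for comparison: one weight per channel, the spatial mean of its gradient."""
    weights = gradients.mean(axis=(0, 1))                # shape (K,)
    cam = np.maximum((activations * weights).sum(axis=-1), 0.0)
    return cam / (cam.max() + 1e-8)

# Random stand-ins for the real layer outputs and gradients (assumed shapes).
rng = np.random.default_rng(0)
acts = rng.random((14, 14, 8))
grads = rng.normal(size=(14, 14, 8))
layer_map = layer_cam(acts, grads)
grad_map = grad_cam(acts, grads)
```

The only difference between the two functions is the weight: Grad-CAM averages the gradient over all spatial positions, while Layer CAM keeps a separate weight per position, which is what lets it stay informative on shallow, high-resolution layers. Either map would still need to be resized to the input resolution before being overlaid on the image.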

The Class Activation Map Technique

The CAM framework is described in the research paper "Learning Deep Features for Discriminative Localization": http://arxiv.org/pdf/1512.04150.pdf
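As a rough sketch of the idea in that paper: for a network whose last convolutional layer is followed by global average pooling and a single dense layer, the map for a class is the sum of the final feature maps weighted by that class's dense-layer weights. The NumPy code below assumes the feature maps and dense weights have already been read out of the model; the function name `class_activation_map` and the array shapes are illustrative assumptions, not this repository's API.

```python
import numpy as np

def class_activation_map(feature_maps, dense_weights, class_idx):
    """feature_maps: (H, W, K) output of the last conv layer.
    dense_weights: (K, num_classes) weights of the dense layer after global average pooling.
    Returns the unnormalized (H, W) map for class_idx."""
    return feature_maps @ dense_weights[:, class_idx]

# Random stand-ins for values that would come from a trained model.
rng = np.random.default_rng(0)
feature_maps = rng.random((7, 7, 16))
dense_weights = rng.normal(size=(16, 10))
cam = class_activation_map(feature_maps, dense_weights, class_idx=3)
```

Because pooling and the dense layer are both linear, the spatial mean of this map equals the class score before the bias and softmax, which is why each location's value can be read as its contribution to the prediction.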

The Pixel-space Saliency Map Technique

The saliency map for an input image is generated with respect to the output label of interest, following the procedure in the paper "Deep Inside Convolutional Networks". The method builds on the backpropagation algorithm: the deltas obtained at a layer L equal the gradient of the loss incurred by the subgraph (subnet) that follows L with respect to the outputs at L. Backpropagating all the way to the input data layer therefore yields the gradient of the loss incurred by the whole CNN with respect to the input itself, which gives the importance (saliency) of each pixel of the input image.
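The backpropagation argument above can be illustrated on a toy network. The sketch below is not the repository's TensorFlow code: it assumes a hypothetical two-layer ReLU network written in NumPy with random weights, and it differentiates the class score (as the cited paper does) rather than a loss. The per-pixel saliency is taken as the maximum absolute gradient over the colour channels.

```python
import numpy as np

def toy_scores(x, w1, w2):
    """Forward pass of the toy network: flatten -> dense -> ReLU -> dense."""
    return w2 @ np.maximum(w1 @ x.ravel(), 0.0)

def class_score_gradient(x, w1, w2, class_idx):
    """Backpropagate the score of class_idx down to the input x."""
    z = w1 @ x.ravel()                          # hidden pre-activations
    delta_hidden = w2[class_idx] * (z > 0)      # delta at the hidden layer
    grad_input = w1.T @ delta_hidden            # delta at the input layer
    return grad_input.reshape(x.shape)

def saliency_map(grad):
    """Collapse an (H, W, C) gradient into an (H, W) map scaled to [0, 1)."""
    sal = np.abs(grad).max(axis=-1)
    return sal / (sal.max() + 1e-8)

# Random image and weights, purely for illustration.
rng = np.random.default_rng(0)
image = rng.random((8, 8, 3))
w1 = rng.normal(size=(16, image.size))
w2 = rng.normal(size=(4, 16))
grad = class_score_gradient(image, w1, w2, class_idx=2)
saliency = saliency_map(grad)
```

With a real Keras model the same gradient would typically come from automatic differentiation (for example `tf.GradientTape` watching the input tensor) instead of the hand-written backward pass used here.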